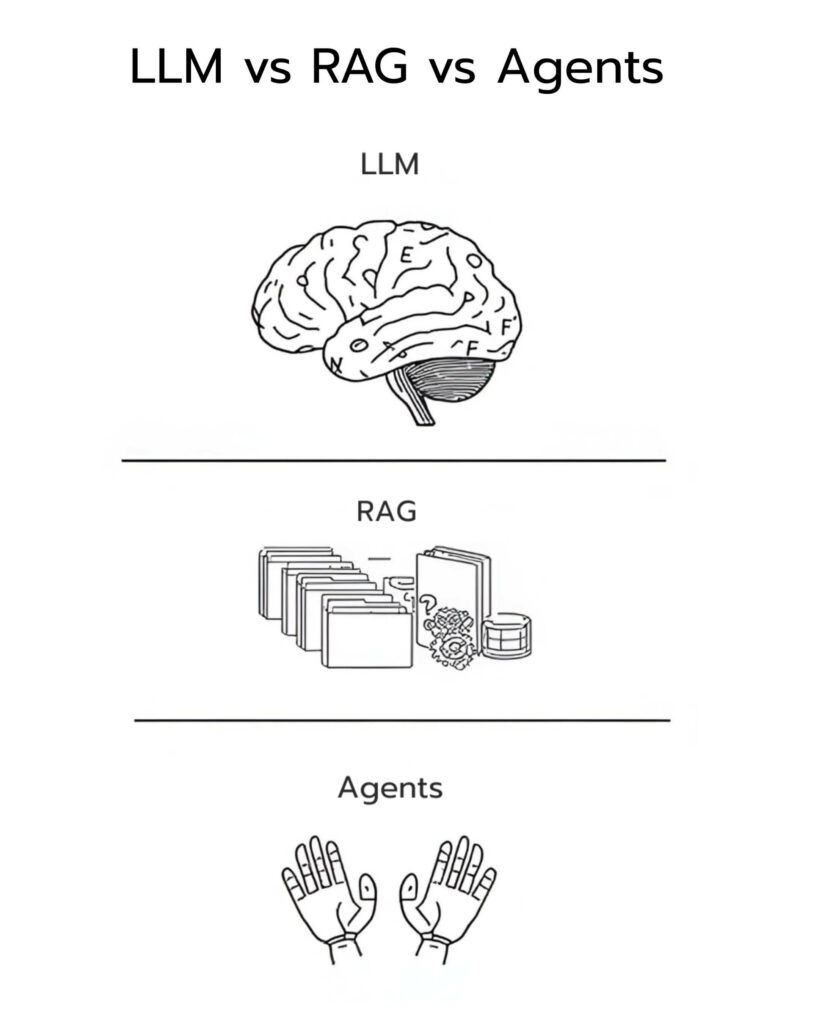

New AI terms arrive faster than most people can absorb them. LLM (Large Language Model), RAG (Retrieval-Augmented Generation), and AI Agents sound complex, but they aren’t competitors. Think of them as the brain, memory, and decision-making system that together form a complete “Intelligent Stack.” Whether you’re an AI enthusiast, a developer, or a business leader, this guide strips away the jargon, shows how to use each layer effectively, and flags common pitfalls. Let’s break down how the three pillars relate and where each one fits, from basics to implementation.

LLMs: The “Thinking Brain”—Brilliant, but Myopic

Imagine your AI system has a hyper-intelligent brain—this is what we call an LLM. Models like GPT-4, which powers ChatGPT, are classic examples. They can:

- Understand and generate language: Assisting with writing, explaining complex concepts, and engaging in human-like dialogue.

- Perform reasoning: Analyzing problems, synthesizing information, and crafting narratives.

Why are LLMs so powerful? They are trained on massive text datasets to predict and reproduce the patterns of human language. For instance, if you ask “How do I cook the perfect pasta?” an LLM can provide a detailed, step-by-step recipe.

However, LLMs have a major limitation: they are “frozen in time.” Their training data has a cutoff date, so GPT-4, for example, is unaware of events that occurred after its training ended. If you ask about yesterday’s stock market, it may produce a confident but fabricated answer, known as a “hallucination.” In short, LLMs are excellent at thinking, but on their own they know nothing about events after their training cutoff or about your private data.

Pro tip: Use raw LLMs for creative tasks, brainstorming, or writing, but don’t rely on them for real-time or domain-specific facts. That’s where the next layer comes in.

RAG: Expanding AI Memory to “See” the Real World

No matter how smart an LLM is, it needs a “memory” to broaden its vision. This is the role of RAG (Retrieval-Augmented Generation). Think of RAG as a super-powered librarian that connects the LLM to an external knowledge base. How does it work?

- Retrieval: When you ask a question, the system searches a knowledge source (such as an index of internal company documents or a live news feed) for relevant passages.

- Augmentation: The retrieved passages are added to the LLM’s prompt as context.

- Generation: The LLM writes an answer grounded in that context, so the answer is as current as the knowledge source itself.
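
The three steps above can be sketched in a few lines of Python. This is a minimal illustration, not a production design: the `DOCUMENTS` dictionary, the word-overlap scoring, and the `echo_llm` stand-in are all assumptions made for the example. A real system would more likely use embeddings with a vector index and call an actual model.

```python
import re
from typing import Callable, Dict, List, Tuple

# Hypothetical in-memory knowledge base. A real system would typically
# use a search index or vector database instead.
DOCUMENTS: Dict[str, str] = {
    "ac-manual": "Service the AC filter every three months and clean the coils yearly.",
    "returns-policy": "Products can be returned within 30 days with a receipt.",
    "warranty": "The warranty covers parts and labor for two years.",
}


def tokenize(text: str) -> set:
    """Lowercase the text and split it into a set of words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question: str, documents: Dict[str, str], top_k: int = 1) -> List[Tuple[str, str]]:
    """Retrieval: rank documents by how many words they share with the question."""
    question_words = tokenize(question)
    ranked = sorted(
        documents.items(),
        key=lambda item: len(question_words & tokenize(item[1])),
        reverse=True,
    )
    return ranked[:top_k]


def augment(question: str, passages: List[Tuple[str, str]]) -> str:
    """Augmentation: place the retrieved passages in the prompt as context."""
    context = "\n".join(f"[{doc_id}] {text}" for doc_id, text in passages)
    return (
        "Answer using only the context below.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {question}"
    )


def generate(prompt: str, llm: Callable[[str], str]) -> str:
    """Generation: hand the augmented prompt to whatever model is plugged in."""
    return llm(prompt)


def echo_llm(prompt: str) -> str:
    """Stand-in for a real model call: it only reports the prompt size."""
    return f"(model reply to a {len(prompt)}-character prompt)"


question = "How do I service my AC?"
passages = retrieve(question, DOCUMENTS)
prompt = augment(question, passages)
print(generate(prompt, echo_llm))
```

Keeping the document id in the prompt (`[ac-manual]`) is what lets the final answer point back to its source.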

The Magic of RAG

- Dynamic Updates: You can add new knowledge without retraining the model. Ask “What is the company’s latest policy?” and RAG retrieves the updated file as soon as it has been indexed.

- Enhanced Accuracy: Answers are grounded in real sources. You can trace the documentation behind the output, significantly reducing hallucinations.

- Low-Cost Maintenance: Updating a document index is typically far cheaper and faster than retraining or fine-tuning a model.
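
To make the first two points concrete, here is a small sketch of a knowledge base that can be updated in place and that reports which documents an answer drew on. The `KnowledgeBase` class, its `upsert` and `search` methods, and the sample policy texts are hypothetical names invented for this example. The word-overlap ranking is a simplification standing in for a real search index.

```python
import re
from dataclasses import dataclass, field
from typing import Dict, List


def words(text: str) -> set:
    """Lowercase the text and split it into a set of words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


@dataclass
class KnowledgeBase:
    """Toy document store; stands in for a search index or vector database."""
    docs: Dict[str, str] = field(default_factory=dict)

    def upsert(self, doc_id: str, text: str) -> None:
        """Add or replace a document. No model weights are touched."""
        self.docs[doc_id] = text

    def search(self, query: str, top_k: int = 1) -> List[str]:
        """Return ids of the documents that share the most words with the query."""
        query_words = words(query)

        def score(doc_id: str) -> int:
            return len(query_words & words(self.docs[doc_id]))

        ranked = sorted(self.docs, key=score, reverse=True)
        return [doc_id for doc_id in ranked[:top_k] if score(doc_id) > 0]


def context_with_sources(question: str, kb: KnowledgeBase) -> dict:
    """Collect the context for an answer together with the ids it came from."""
    ids = kb.search(question)
    return {"sources": ids, "context": [kb.docs[i] for i in ids]}


kb = KnowledgeBase()
kb.upsert("remote-policy", "Remote work policy: employees may work remotely two days per week.")
kb.upsert("travel-policy", "Travel policy: book flights at least two weeks ahead.")

question = "What is the latest remote work policy?"
before = context_with_sources(question, kb)

# The policy changes: replace the document, leave the model alone.
kb.upsert("remote-policy", "Remote work policy: employees may work remotely three days per week.")
after = context_with_sources(question, kb)

print(before["sources"], before["context"])
print(after["sources"], after["context"])
```

The same question returns different context before and after the update, and the `sources` list is what makes the answer traceable.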

Example: In a customer support system, RAG connects to product manuals. When a user asks “How do I service my AC?” the AI cites the official guide directly—reliable and precise.

Fun fact: A question like “how does RAG improve AI accuracy?” is itself a classic RAG use case, because the best answer comes from retrieved sources rather than from the model’s memory alone. RAG transforms static LLMs into “living” systems, which makes it a good fit for knowledge-intensive domains like legal or medical research.

AI Agents: The “Decision-Maker” Turning Thought into Action

LLMs think and RAG remembers, but who actually does the work? AI Agents serve as the commander-in-chief. They aren’t a single tool, but a “control loop” framework wrapping LLMs and RAG to enable autonomy.

Core Mechanism of AI Agents:

- Perceiving Goals: Understanding user intent, e.g., “Research market trends and write a report.”

- Planning: Breaking tasks down into steps, like “Search, analyze, then draft the report.”

- Executing: Invoking tools (browsers, APIs) to perform actual operations.

- Reflecting/Optimizing: Checking results and iterating to improve performance.
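
The loop described above can be sketched as follows. Everything here is a simplified assumption: the `search_tool` and `draft_tool` functions are hypothetical stand-ins for real tools, `plan` returns a fixed plan where a real agent would usually ask an LLM to produce one, and `reflect` is a trivial check rather than a genuine quality review.

```python
from typing import Callable, Dict, List, Optional


def search_tool(query: str) -> str:
    """Hypothetical tool: pretend to search the web."""
    return f"search results for '{query}'"


def draft_tool(notes: str) -> str:
    """Hypothetical tool: pretend to write a report from notes."""
    return f"REPORT: {notes}"


TOOLS: Dict[str, Callable[[str], str]] = {"search": search_tool, "draft": draft_tool}


def plan(goal: str) -> List[Dict[str, Optional[str]]]:
    """Planning: a fixed two-step plan. An input of None means
    'use the previous step's output'."""
    return [
        {"tool": "search", "input": goal},
        {"tool": "draft", "input": None},
    ]


def reflect(result: str) -> bool:
    """Reflecting: accept any non-empty result. A real agent might
    ask an LLM to critique the output instead."""
    return bool(result.strip())


def run_agent(goal: str, max_retries: int = 2) -> List[str]:
    """Run the perceive, plan, execute, reflect loop and return each step's output."""
    history: List[str] = []
    previous = ""
    for step in plan(goal):
        tool = TOOLS[step["tool"]]
        tool_input = previous if step["input"] is None else step["input"]
        for _ in range(max_retries + 1):
            result = tool(tool_input)  # Executing
            if reflect(result):        # Reflecting
                break
        else:
            raise RuntimeError(f"step '{step['tool']}' failed after retries")
        history.append(result)
        previous = result
    return history


for output in run_agent("market trends"):
    print(output)
```

The retry loop is the “control loop” part: the agent does not just call a tool once, it checks the result and tries again before moving on.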

Why do we need Agents? A standalone LLM or RAG can only “answer questions”; Agents can “solve problems.” For example:

- Automated Sales Reporting: Agents use RAG to pull data, an LLM to analyze it, and then email the team.

- Smart Assistants: Booking flights, checking weather, and adjusting your itinerary autonomously.

Key Takeaway: Agents don’t replace LLMs; they “wrap” them, turning AI from a passive chatbot into an active executor.

A Perfect Partnership: The “Intelligent Stack”

Many people mistakenly treat LLMs, RAG, and Agents as an either-or choice. In reality, they are a three-layer architecture:

- LLM (Reasoning Layer): The core brain providing linguistic intelligence.

- RAG (Memory Layer): Connecting real-time knowledge to ensure accuracy.

- Agents (Action Layer): Driving autonomy for complex workflows.

In production systems, the layers are combined according to what the task needs:

- Pure LLM: Creative writing and simple explanations.

- LLM + RAG: Accurate Q&A based on knowledge bases.

- Full Stack (LLM + RAG + Agents): Autonomous workflows, like intelligent helpdesks or automated content creation.
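
One way to think about the choice is as a simple decision on two questions: does the task need knowledge the model lacks, and does it need to take actions? The helper below is only a sketch of that reasoning. The function name `choose_stack` and its two flags are invented for illustration, and it also permits an LLM plus Agent combination that the list above does not mention.

```python
from typing import List


def choose_stack(needs_external_knowledge: bool, needs_actions: bool) -> List[str]:
    """Return the layers a task calls for, starting from the LLM core."""
    layers = ["LLM"]  # every configuration includes the reasoning layer
    if needs_external_knowledge:
        layers.append("RAG")    # memory layer for current or private facts
    if needs_actions:
        layers.append("Agent")  # action layer for multi-step workflows
    return layers


print(choose_stack(False, False))  # creative writing
print(choose_stack(True, False))   # Q&A over a knowledge base
print(choose_stack(True, True))    # autonomous helpdesk workflow
```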

Real-world case: An e-commerce company uses this stack to automate product listings—RAG pulls inventory, the LLM generates descriptions, and Agents handle publication. The result? Doubled efficiency and near-zero errors.

Future Outlook: Become an AI Architect

The future of AI isn’t one single technology, but the seamless integration of systems. LLMs think, RAG knows, and Agents act—that is the essence of true “Artificial Intelligence.”

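If you’re a developer, start with widely used tools: LangChain, an open-source framework, for Agents, and a vector database such as Pinecone, a managed service, for your RAG backend. Enterprise users? Start by evaluating whether your internal data is ready to be indexed and retrieved.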